When it comes to Technical SEO, one of the most fundamental components for any website is ensuring that search engines can find, crawl, and index your content. For travel companies, this is especially crucial, as a significant portion of the business relies on organic search traffic. Without proper crawlability and indexability, even the most well-optimized content and marketing efforts could go unnoticed by search engines.

At Wander Women Strategies, we understand the importance of these technical elements for travel businesses. In this article, we’ll explore what crawlability and indexability mean, how you can perform an audit to ensure both are functioning optimally, and why this is so important for travel websites.

What Is a Crawlability and Indexability Review?

Before diving into how to assess these elements, it’s essential to understand what crawlability and indexability mean in the context of SEO.

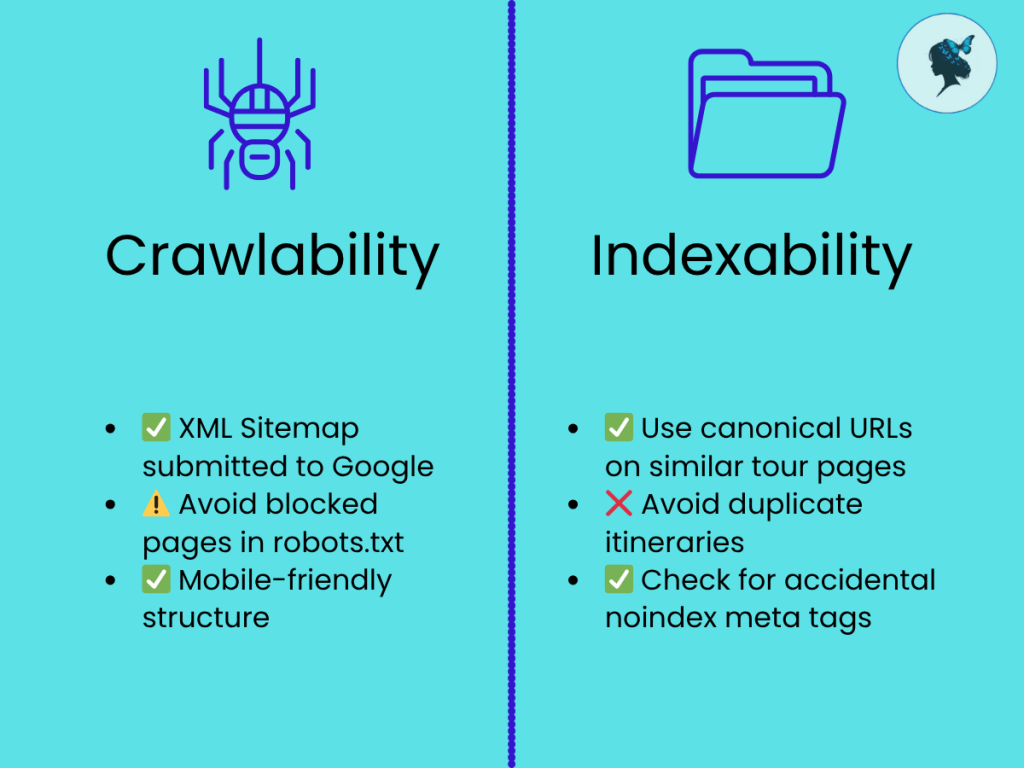

Crawlability

Crawlability refers to how easily search engine bots (such as Googlebot) can access and navigate the pages of a website. These bots explore the web by following links, fetching content, and passing it back to the search engine for processing. If a search engine can’t crawl your website, it can’t retrieve your content to show in the search results.

For a travel website, ensuring crawlability is critical for getting your pages indexed and showing up for relevant search queries, whether for hotel bookings, flight comparisons, or travel experiences.

Indexability

Indexability is closely tied to crawlability but takes it one step further. Once search engine bots crawl a website, they analyze the content and decide whether to include it in the search engine’s index. The index is essentially the database of all content that search engines display in their results pages. A page may be crawled but not indexed if it’s deemed irrelevant, duplicated, or otherwise not suitable for indexing.

For travel companies, it’s essential that all key pages—like booking forms, destination pages, and hotel listings—are not just crawled but also indexed properly, so potential customers can find them via search engines.

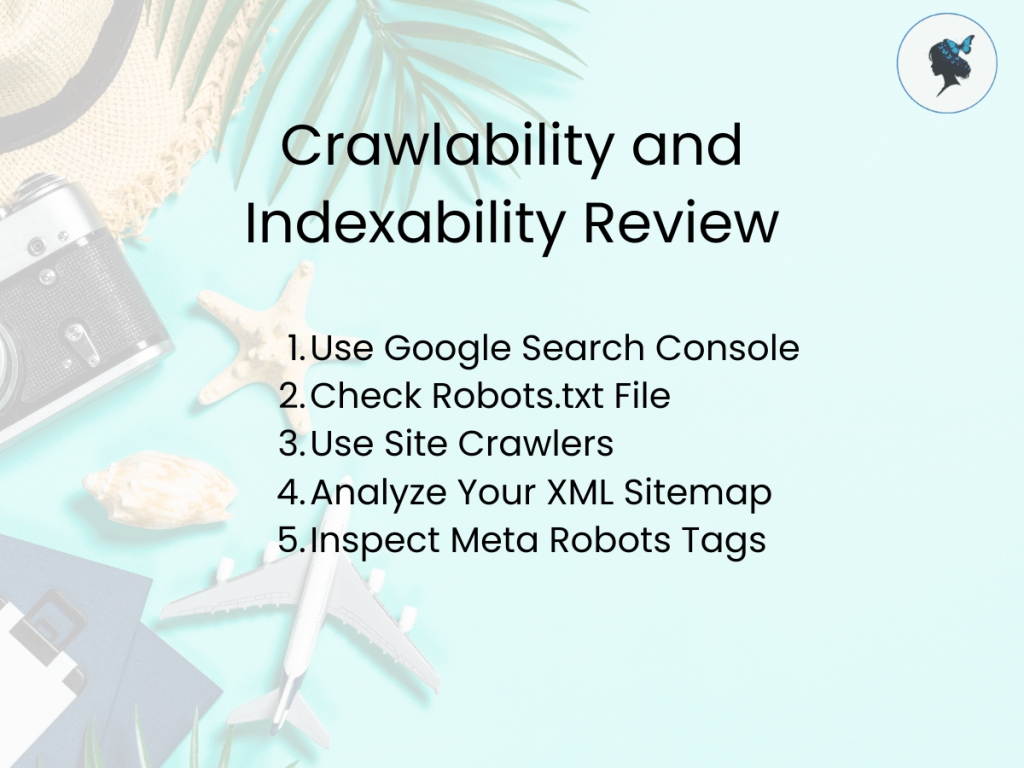

How to Perform a Crawlability and Indexability Review

Performing a crawlability and indexability review involves using several tools and techniques to ensure that search engine bots can easily crawl and index your website. Here’s a step-by-step guide on how to conduct this review:

1. Use Google Search Console

Google Search Console is one of the most valuable tools for reviewing crawlability and indexability. It provides direct insight from Google into how it sees your website.

How to Use Google Search Console:

- Crawl Errors: Open the “Pages” report (under Indexing) in Google Search Console to see which URLs are not indexed and why. The reasons listed may include pages that Googlebot can’t reach because of broken links, server errors, or rules in your site’s robots.txt file.

- Sitemap Submission: Ensure that your sitemap is submitted to Google Search Console and includes all important pages. This makes it easier for Googlebot to crawl your site efficiently.

- URL Inspection Tool: Use this tool to inspect the status of specific pages. You can check whether the page is crawled and indexed and diagnose any potential issues preventing indexing.

2. Check Robots.txt File

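Your robots.txt file tells search engine bots which URLs they are allowed to crawl and which to avoid. This file is critical for managing the crawlability of your website, especially for keeping bots away from duplicate content or internal pages with no search value (like login pages or thank-you pages). Keep in mind that robots.txt controls crawling rather than indexing: a blocked URL can still end up in the index if other pages link to it, so use a “noindex” directive for pages that must stay out of search results.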

How to Review:

- Ensure Important Pages Are Accessible: Check that your robots.txt file is not blocking important pages from being crawled. For example, a travel company might want to block admin or checkout pages but should allow product or destination pages to be crawled.

- Avoid Disallowing High-Value Pages: Sometimes, poorly configured robots.txt files accidentally block key pages, which means Google can’t read their content and is unlikely to rank them well. Be careful not to block pages that could help improve your search rankings.
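
As a rough illustration of the checks above, here is a minimal Python sketch that tests a list of important URLs against a set of robots.txt rules using the standard library's `urllib.robotparser`. The rules, the `example.com` URLs, and the `blocked_urls` helper are all assumptions made up for this example, not taken from a real site. The standard-library parser uses simple prefix matching, so it may not mirror how Google handles wildcard rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules for a travel site (illustrative only).
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /checkout/
"""

# Hypothetical URLs we would like search engines to be able to crawl.
IMPORTANT_URLS = [
    "https://www.example.com/destinations/lisbon/",
    "https://www.example.com/hotels/",
    "https://www.example.com/checkout/payment",
]


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules forbid user_agent from fetching."""
    parser = RobotFileParser()
    # parse() accepts the file's lines, so no network request is needed here.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


for url in blocked_urls(ROBOTS_TXT, IMPORTANT_URLS):
    print("Blocked by robots.txt:", url)
```

In a real review you would paste in your own robots.txt and your own list of key pages. Any destination or listing page that shows up as blocked deserves a closer look.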

3. Use Site Crawlers

Several SEO tools can crawl your website and identify issues that could hinder crawlability and indexability. Tools like Screaming Frog, Semrush, and Ahrefs offer in-depth crawling functionality to identify crawl issues.

How to Use Site Crawlers:

- Crawl Your Site: Use a site crawler like Screaming Frog to identify pages that bots cannot reach. It will also show you any redirects, broken links, and other issues that could affect crawlability.

- Check for Duplicates: Site crawlers also help detect duplicate content on your site, which can hurt indexability. In the travel industry, this often happens with duplicate hotel listings, destination pages, or content that is copied from other sites.
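
To give a feel for what a duplicate check does, here is a simplified Python sketch that groups pages whose text is identical once whitespace and letter case are ignored. This is not how Screaming Frog, Semrush, or Ahrefs work internally. It is an exact-match approach under our own assumptions, and the page paths, sample text, and function names are hypothetical.

```python
import hashlib
import re
from collections import defaultdict


def content_fingerprint(text: str) -> str:
    """Hash the page text after collapsing whitespace and lowercasing it."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicate_groups(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose normalized text is identical; return groups with 2+ URLs."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url, text in pages.items():
        groups[content_fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical page texts, e.g. the same hotel listed under two URLs.
sample_pages = {
    "/hotels/lisbon/hotel-a": "Hotel A  Lisbon\nRooftop pool and river views",
    "/deals/hotel-a": "hotel a lisbon rooftop pool and river views",
    "/hotels/porto/hotel-b": "Hotel B Porto, steps from the old town",
}

for group in find_duplicate_groups(sample_pages):
    print("Possible duplicates:", ", ".join(group))
```

Near-duplicates (pages that differ by only a sentence or two) would need a similarity measure rather than an exact hash, which is where dedicated crawling tools are more useful.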

4. Analyze Your XML Sitemap

An XML sitemap is a file that lists all of the important pages of your website. It’s essentially a roadmap for search engines, telling them which pages are most important to crawl. It’s essential that your sitemap is complete and up-to-date to maximize crawlability.

How to Review:

- Ensure it’s Comprehensive: The XML sitemap should include all pages you want indexed by search engines, such as blog posts, service pages, and booking pages.

- Submit to Google Search Console: Submit your updated sitemap through Google Search Console to ensure search engines can crawl and index all the necessary pages.

- Remove Low-Value Pages: If you have pages with little or no SEO value (like privacy policy pages), remove them from the sitemap.
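
The review steps above can be sketched in a few lines of Python. The example below parses a sitemap with the standard library's `xml.etree.ElementTree` and reports key pages that are missing and low-value pages that are still listed. The sample sitemap, the `example.com` URLs, and the helper names are assumptions for illustration. The namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace used by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content (illustrative only).
SAMPLE_SITEMAP = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/destinations/lisbon/</loc></url>
  <url><loc>https://www.example.com/privacy-policy/</loc></url>
</urlset>
"""


def sitemap_urls(xml_text: str) -> set[str]:
    """Return every <loc> URL listed in a sitemap document."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def audit_sitemap(xml_text: str, must_include: list[str], should_exclude: list[str]) -> dict[str, list[str]]:
    """Report key pages missing from the sitemap and low-value pages still in it."""
    listed = sitemap_urls(xml_text)
    return {
        "missing": sorted(set(must_include) - listed),
        "unwanted": sorted(listed & set(should_exclude)),
    }


report = audit_sitemap(
    SAMPLE_SITEMAP,
    must_include=[
        "https://www.example.com/destinations/lisbon/",
        "https://www.example.com/hotels/",
    ],
    should_exclude=["https://www.example.com/privacy-policy/"],
)
print(report)
```

The `must_include` and `should_exclude` lists are where your own judgment comes in: they should reflect the pages you actually want travelers to find through search.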

5. Inspect Meta Robots Tags

Meta robots tags are HTML elements that tell search engine crawlers whether to index a page and whether to follow the links on it. If these tags are set incorrectly, they can prevent pages from being indexed.

How to Review:

- Check for “noindex” Tags: Ensure that important pages are not mistakenly set with the “noindex” directive in their meta tags. This would prevent search engines from indexing the page.

- Check for “nofollow” Tags: Similarly, check for “nofollow” tags that may prevent search engines from following valuable links on your pages.
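
For a quick spot check, the Python sketch below pulls the directives out of a page's meta robots tag using the standard library's `html.parser`. The sample HTML and the class and function names are hypothetical. It only looks at `<meta name="robots">` and `<meta name="googlebot">` tags and does not cover directives sent through HTTP headers.

```python
from html.parser import HTMLParser


class MetaRobotsParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() in {"robots", "googlebot"}:
            for directive in attributes.get("content", "").split(","):
                directive = directive.strip().lower()
                if directive:
                    self.directives.add(directive)


def robots_directives(html: str) -> set[str]:
    """Return the set of meta robots directives found in an HTML document."""
    parser = MetaRobotsParser()
    parser.feed(html)
    return parser.directives


# Hypothetical destination page that was accidentally left as noindex.
sample_html = """
<html><head>
  <title>Lisbon Travel Guide</title>
  <meta name="robots" content="noindex, nofollow">
</head><body></body></html>
"""

found = robots_directives(sample_html)
if "noindex" in found:
    print("Warning: this page asks search engines not to index it.")
if "nofollow" in found:
    print("Warning: this page asks search engines not to follow its links.")
```

Running a check like this across your key destination and listing pages is a simple way to catch a stray “noindex” left over from a staging site or a template change.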

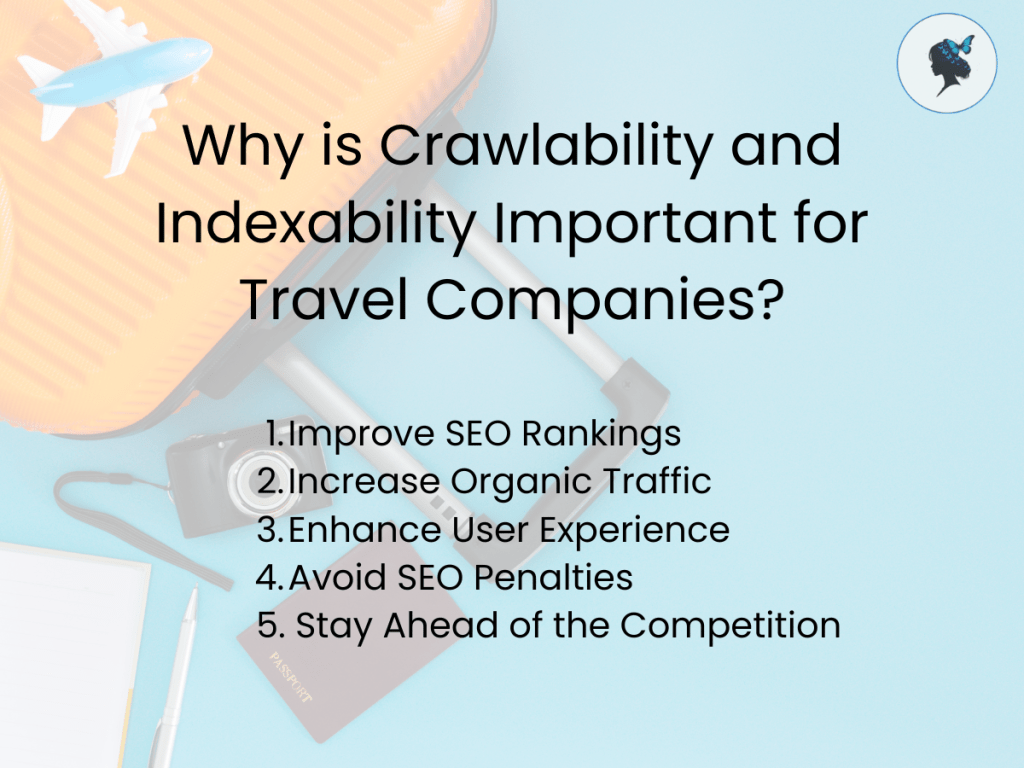

Why is Crawlability and Indexability Important for Travel Companies?

Improve SEO Rankings

Without crawlability and indexability, search engines can’t discover and rank your pages. If your travel website’s pages aren’t being indexed, they won’t appear in search results, and potential customers won’t find you.

Increase Organic Traffic

As a travel company, your customers are most likely searching for destinations, flights, accommodations, and activities via search engines. Ensuring your pages are crawlable and indexable directly affects your organic search traffic, which is often one of the top sources of traffic for travel businesses.

Enhance User Experience

When your website is crawlable and its content is indexed properly, users can find what they’re looking for easily and efficiently. This not only boosts SEO but also leads to higher user satisfaction, which can convert visitors into customers.

Avoid SEO Penalties

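Incorrectly blocking or de-indexing important pages can remove them from search results altogether, and letting search engines crawl large amounts of duplicate content can dilute ranking signals and waste crawl budget. Google does not generally issue a formal penalty for these mistakes, but the effect on your rankings and traffic can be just as damaging, making it harder to compete with other travel companies.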

Stay Ahead of the Competition

In the travel industry, competition is fierce. By ensuring your website is fully optimized for crawlability and indexability, you can rank higher in search results, increasing your visibility and staying ahead of your competitors.

Contact Wander Women Strategies today to schedule your Crawlability and Indexability Review. Let us help your travel website soar in search rankings and attract more visitors!